Private linking an Azure Container App Environment

As of February 2022, a Container Apps custom virtual network requires a subnet size of at least /21. This post shows how to connect such a Container App Environment to a network with limited address space by means of private link.

This version is reworked for the final Container Apps namespace Microsoft.App.

This week, virtual network integration, and with it a bring-your-own virtual network capability, was made available for Container App Environments: https://techcommunity.microsoft.com/t5/apps-on-azure-blog/azure-container-apps-virtual-network-integration/ba-p/3096932

As mentioned in my Container Apps post last week, this virtual network integration is essential in my scenario: migrating workloads from currently VNET-integrated Service Fabric clusters to Container Apps.

Unfortunately, and I guess only for the moment until scaling behavior is more nuanced, subnets of size /21 or larger (a prefix length of 21 or less) are required: https://docs.microsoft.com/en-us/azure/container-apps/vnet-custom?tabs=bash&pivots=azure-portal#restrictions
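To put the /21 requirement into numbers, here is a small shell sketch that computes how many IPv4 addresses a given prefix length covers. The helper name cidr_size is hypothetical and not part of the repository referenced in this post.

```shell
# Number of IPv4 addresses covered by a subnet with the given prefix length.
# cidr_size is a hypothetical helper used only for illustration.
cidr_size() {
  prefix="$1"
  echo $(( 1 << (32 - $prefix) ))
}

cidr_size 21   # prints 2048
cidr_size 24   # prints 256
```

A /21 subnet therefore takes eight times the address space of a typical /24, which is what makes it hard to fit into a tightly planned corporate network.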

Still I want to continue exploring Container Apps for our scenario and so I mixed in some private link and private DNS magic I already used a while back.

Solution Elements

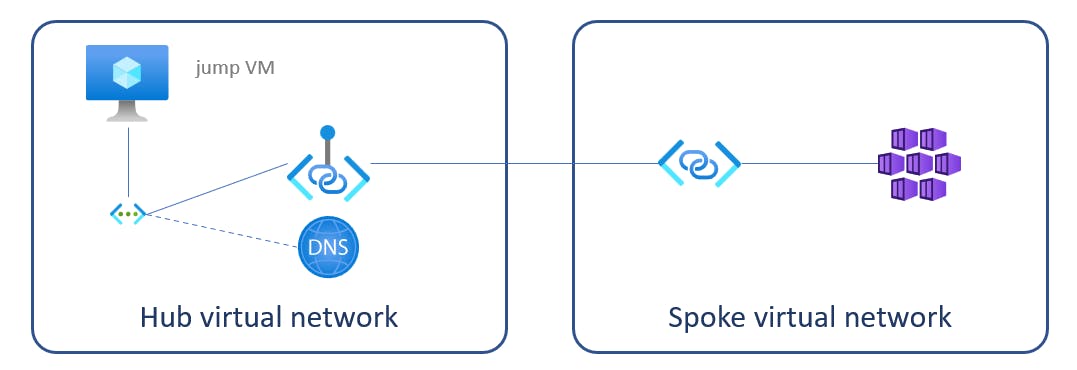

To simplify terms for this post, I assume the corporate network with the limited address space is the hub network and the virtual network containing Container Apps (with the "huge" address space) is the spoke network.

This is the suggested configuration:

- a private link service within the spoke network linked to the kubernetes-internal load balancer
- a private endpoint in the hub network linked to the private link service above
- a private DNS zone with the Container Apps domain name and a wildcard (*) A record pointing to the private endpoint's IP address
- a jump VM in the hub network to test service invocation

DISCLAIMER: the approach in this article is based on the assumption that the underlying AKS node resource group is visible and exposed, and that its name matches the environment's domain name. In my sample configuration the domain was redrock-70deffe0.westeurope.azurecontainerapps.io, which resulted in the node pool resource group MC_redrock-70deffe0-rg_redrock-70deffe0_westeurope. This in turn allows one to find the kubernetes-internal ILB needed to create the private endpoint. According to the Container Apps team at Microsoft, this assumption should remain valid after General Availability (GA).
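The naming relationship in the disclaimer can be sketched in shell as follows. The pattern MC_<code>-rg_<code>_<region> is inferred from the single sample above and is an assumption, not a documented contract; the helper names env_code and node_rg_name are hypothetical.

```shell
# First DNS label of the default domain, e.g. "redrock-70deffe0".
env_code() {
  echo "${1%%.*}"
}

# Presumed AKS node resource group for an environment's default domain.
# Assumption: the name follows MC_<code>-rg_<code>_<region>, inferred
# from one sample environment only.
node_rg_name() {
  code="${1%%.*}"        # first DNS label
  rest="${1#*.}"         # everything after the first dot
  region="${rest%%.*}"   # second DNS label
  echo "MC_${code}-rg_${code}_${region}"
}

node_rg_name "redrock-70deffe0.westeurope.azurecontainerapps.io"
# prints MC_redrock-70deffe0-rg_redrock-70deffe0_westeurope
```

The deployment script in this post does not build the name this way; it searches the resource group list for a name containing the environment code, which tolerates small deviations from the pattern.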

Below I will refer to shell scripts and Bicep templates I keep in this repository path: https://github.com/KaiWalter/container-apps-experimental/tree/ca-private-link/ca-bicep.

Prerequisites

- Azure CLI version >=2.34.1, containerapp extension >= 0.1.0

- Bicep CLI version >=0.4.1272

- .NET Core 3.1 + 6.0 SDK for application build and deployment

Why mix Bicep and Azure CLI?

I handled deployment for my previous post with Pulumi, yet had to turn to Bicep for this deployment because the InternalLoadBalancerEnabled = true setting was not processed correctly (tested with Pulumi.Native 1.56.0-alpha.1643832293). Although I personally prefer the descriptive style of Bicep, processed in a linked set of templates, over a long string of Azure CLI commands, I had to fall back to the CLI because not all Container App properties, such as staticIp and defaultDomain, could yet be processed as Bicep outputs.

Stage 1 - deploy network and Container Apps environment

The first section of deploy.sh together with main.bicep deploys

- hub and spoke network with network.bicep
- logging workspace and Application Insights with logging.bicep
- Container App Environment connected to spoke network and an internal load balancer with environment.bicep
- a jump VM with vm.bicep
- a Container Registry with cr.bicep for later application deployments

if [ $(az group exists --name $RESOURCE_GROUP) = false ]; then
    az group create --name $RESOURCE_GROUP --location $LOCATION
fi

if [ "$1" == "" ]; then
    SSHPUBKEY=$(cat ~/.ssh/id_rsa.pub) # create with ssh-keygen first
else
    SSHPUBKEY=
fi

az deployment group create --resource-group $RESOURCE_GROUP \
    --template-file main.bicep \
    --parameters environmentName=$ENVIRONMENTNAME \
    adminPasswordOrKey="$SSHPUBKEY" \
    --query properties.outputs

For the VM deployment it is assumed that an id_rsa and id_rsa.pub key pair is generated with ssh-keygen or available in ~/.ssh.

Stage 2 - Private Link Service and Private Endpoint

Here the static IP of the Container App Environment is used to find the corresponding internal load balancer's frontend IP configuration. This is not the most elegant or reliable way, but it should do until I find a better reference from the Container App Environment to the frontend IP configuration.
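One way to tie the lookup to the static IP rather than to a position in the list is a JMESPath filter on the frontend IP configurations. The sketch below only builds such a query string. The helper name fip_query_for_ip and the sample address 10.0.0.4 are hypothetical, and the property names follow the casing used in the scripts of this post, which is an assumption that may not hold for every Azure CLI version.

```shell
# Build a JMESPath query selecting the frontend IP configuration whose
# private IP equals the given address.
# Assumption: property casing (frontendIpConfigurations, privateIpAddress)
# matches the CLI output; it may differ between Azure CLI versions.
fip_query_for_ip() {
  printf "frontendIpConfigurations[?privateIpAddress=='%s'].id | [0]" "$1"
}

# Example with a made-up address:
fip_query_for_ip "10.0.0.4"; echo

# Possible use, not executed here:
# az network lb show -g "$CLUSTER_RG" -n kubernetes-internal \
#   --query "$(fip_query_for_ip "$ENVIRONMENT_STATIC_IP")" -o tsv
```

If the query returns nothing, that is a signal that the assumed property names or the load balancer name need to be checked against the actual CLI output.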

With privatelink.bicep these elements are created:

- a private link service in the spoke network connected to the internal load balancer's frontend IP configuration
- a private endpoint in the hub network connected to the private link service

# static IP and default domain of the Container App Environment
ENVIRONMENT_STATIC_IP=`az containerapp env show -n $ENVIRONMENTNAME -g $RESOURCE_GROUP --only-show-errors --query properties.staticIp -o tsv`
ENVIRONMENT_DEFAULT_DOMAIN=`az containerapp env show -n $ENVIRONMENTNAME -g $RESOURCE_GROUP --only-show-errors --query properties.defaultDomain -o tsv`
# first label of the domain, e.g. redrock-70deffe0
ENVIRONMENT_CODE=`echo $ENVIRONMENT_DEFAULT_DOMAIN | grep -oP "^[a-z0-9\-]{1,25}"`
echo $ENVIRONMENT_STATIC_IP $ENVIRONMENT_DEFAULT_DOMAIN $ENVIRONMENT_CODE

# node resource group and frontend IP configuration of the internal load balancer
CLUSTER_RG=`az group list --query "[?contains(name, '$ENVIRONMENT_CODE')].name" -o tsv`
ILB_FIP_ID=`az network lb show -g $CLUSTER_RG -n kubernetes-internal --query "frontendIpConfigurations[0].id" -o tsv`
echo $CLUSTER_RG $ILB_FIP_ID

# jump subnets in spoke and hub network
VNET_SPOKE_ID=`az network vnet list --resource-group ${RESOURCE_GROUP} --query "[?contains(name,'spoke')].id" -o tsv`
SUBNET_SPOKE_JUMP_ID=`az network vnet show --ids $VNET_SPOKE_ID --query "subnets[?name=='jump'].id" -o tsv`
echo $VNET_SPOKE_ID $SUBNET_SPOKE_JUMP_ID

VNET_HUB_ID=`az network vnet list --resource-group ${RESOURCE_GROUP} --query "[?contains(name,'hub')].id" -o tsv`
SUBNET_HUB_JUMP_ID=`az network vnet show --ids $VNET_HUB_ID --query "subnets[?name=='jump'].id" -o tsv`
echo $VNET_HUB_ID $SUBNET_HUB_JUMP_ID

az deployment group create --resource-group $RESOURCE_GROUP \
    --template-file privatelink.bicep \
    --parameters "{\"subnetSpokeId\": {\"value\": \"$SUBNET_SPOKE_JUMP_ID\"},\"subnetHubId\": {\"value\": \"$SUBNET_HUB_JUMP_ID\"},\"loadBalancerFipId\": {\"value\": \"$ILB_FIP_ID\"}}"
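Because the lookups in this stage rely on naming conventions, a guard before the deployment can catch empty results early. The following is a minimal sketch, not part of the repository: require_set and deploy_privatelink are hypothetical helpers, and the deployment uses the same key=value parameter form as the main.bicep deployment in stage 1 instead of the escaped JSON string.

```shell
# Fail early when a lookup returned nothing.
# $1 = variable name (for the message), $2 = its value
require_set() {
  if [ -z "$2" ]; then
    echo "lookup failed: $1 is empty" >&2
    return 1
  fi
}

# Hypothetical wrapper, defined but not invoked here. Parameter names
# are the ones passed to privatelink.bicep in the script above.
deploy_privatelink() {
  require_set SUBNET_SPOKE_JUMP_ID "$SUBNET_SPOKE_JUMP_ID" || return 1
  require_set SUBNET_HUB_JUMP_ID "$SUBNET_HUB_JUMP_ID" || return 1
  require_set ILB_FIP_ID "$ILB_FIP_ID" || return 1

  az deployment group create --resource-group "$RESOURCE_GROUP" \
    --template-file privatelink.bicep \
    --parameters subnetSpokeId="$SUBNET_SPOKE_JUMP_ID" \
                 subnetHubId="$SUBNET_HUB_JUMP_ID" \
                 loadBalancerFipId="$ILB_FIP_ID"
}
```

Calling deploy_privatelink after the lookups would stop with a message naming the empty variable instead of handing an incomplete resource ID to the template.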

Stage 3 - Private DNS Zone

Finally, privatedns.bicep creates

- a private DNS zone with the domain name of the Container App Environment
- an A record pointing to the private endpoint
- a virtual network link from the private DNS zone to the hub network

# network interface and private IP of the private endpoint
PEP_NIC_ID=`az network private-endpoint list -g $RESOURCE_GROUP --query "[?name=='pep-container-app-env'].networkInterfaces[0].id" -o tsv`
# query is quoted so the shell does not treat [0] as a glob pattern
PEP_IP=`az network nic show --ids $PEP_NIC_ID --query "ipConfigurations[0].privateIpAddress" -o tsv`

az deployment group create --resource-group $RESOURCE_GROUP \
    --template-file privatedns.bicep \
    --parameters "{\"pepIp\": {\"value\": \"$PEP_IP\"},\"vnetHubId\": {\"value\": \"$VNET_HUB_ID\"},\"domain\": {\"value\": \"$ENVIRONMENT_DEFAULT_DOMAIN\"}}"
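With a wildcard A record in the zone, any host name below the environment's domain resolves to the private endpoint. As a rough illustration only, the shell sketch below tests whether a name falls under the zone. The helper in_zone is hypothetical, and the app host names are assumed to follow the pattern <app>.<default domain>.

```shell
# Return success when host name $1 lies below DNS zone $2.
# in_zone is a hypothetical helper; it only compares strings and
# performs no DNS lookup.
in_zone() {
  case "$1" in
    *".$2") return 0 ;;
    *)      return 1 ;;
  esac
}

zone="redrock-70deffe0.westeurope.azurecontainerapps.io"
for host in "app1.$zone" "app2.$zone" "app1.example.com"; do
  if in_zone "$host" "$zone"; then
    echo "$host -> private endpoint"
  else
    echo "$host -> not covered by this zone"
  fi
done
```

Names outside the zone are unaffected by the private DNS zone and continue to resolve through whatever DNS the hub network already uses.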

Add some apps

build.sh is used to deploy 2 ASP.NET Core apps which provide some basic Dapr service-to-service invocation calls.

Testing the approach

test.sh uses the jump VM to test the direct invocation and the service-to-service invocation of both apps:

app1

{"status":"OK","assembly":"app1, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null","instrumentationKey":null} <<-- check app1 own health

{"status":"OK","assembly":"app2, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null","instrumentationKey":null} <<-- check app1 remote health

app2

{"status":"OK","assembly":"app2, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null","instrumentationKey":null} <<-- check app2 own health

{"status":"OK","assembly":"app1, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null","instrumentationKey":null} <<-- check app2 remote health
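The health responses above could also be checked automatically instead of by eye. This is a minimal sketch using only grep and sed; the helper names health_ok and assembly_name are hypothetical and not taken from test.sh.

```shell
# Succeed when the JSON response reports status OK.
health_ok() {
  printf '%s' "$1" | grep -q '"status":"OK"'
}

# Extract the short assembly name (the part before the first comma).
assembly_name() {
  printf '%s' "$1" | sed -n 's/.*"assembly":"\([^,"]*\),.*/\1/p'
}

sample='{"status":"OK","assembly":"app1, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null","instrumentationKey":null}'
if health_ok "$sample"; then
  echo "$(assembly_name "$sample") healthy"   # prints: app1 healthy
fi
```

For a remote health check, the extracted assembly name should be the other app, which confirms that the service-to-service invocation actually reached it.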

Conclusion

I hope that the BYO virtual network footprint of Container Apps will be reduced before it goes into GA. Such a footprint is one of the main reasons Function / App Service deployments do not really work for our scenario of ~200 Function App microservices (~40 apps, 5 deployment stages).

Nevertheless, the private link approach, which separates the corporate address space from some virtual address space without bringing in one of the heavier resources like Azure Firewall or VPN gateway, would be a valid option for me.